Deploy a Kubernetes Cluster on AWS

This procedure provides instructions for setting up and configuring a Kubernetes (EKS) cluster on Amazon Web Services (AWS) using Terraform-based deployment scripts.

The goal is to prepare the infrastructure required to install kdb Insights Enterprise, ensuring that:

- Essential components such as the VPC, bastion host, security groups, node groups, and associated services are provisioned automatically.

- Both new VPC creation and integration with existing VPCs are supported.

- Configuration is driven by environment variables and architectural profiles, offering flexibility to support various deployment scenarios.

All scripts are packaged in the kxi-terraform bundle and executed within a pre-configured Docker container, providing a consistent setup experience across environments.

Configuring and deploying the infrastructure that supports kdb Insights Enterprise should take approximately 20 minutes.

Terraform artifact

If you have a full commercial license, kdb Insights Enterprise provides default Terraform modules packaged as a TGZ artifact available through the KX Downloads Portal.

You need to download the artifact and extract it as explained in the following sections.

Prerequisites

For this tutorial, you need:

- An AWS account.

- An AWS user with access keys.

- Access to an authoritative DNS service (for example, AWS Route53) to create a DNS record for your kdb Insights Enterprise external URL, which is exposed through the cluster's Ingress Controller.

- A CA-signed certificate (cert.pem and cert.key files) for your cluster's desired hostname, or a wildcard certificate for your DNS sub-domain, for example, *.foo.kx.com.

- Sufficient quotas to deploy the cluster.

- A client machine with AWS CLI.

- A client machine with Docker.
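Before continuing, it can help to confirm that the client tools listed above are on your PATH. The following is a minimal sketch; `missing_tools` is a hypothetical helper name and is not part of the kxi-terraform bundle.

```shell
#!/usr/bin/env bash
# Print the name of every listed command that cannot be found on PATH.
# missing_tools is a hypothetical helper, not part of the kxi-terraform bundle.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
  return 0
}

# Check the two client tools named in the prerequisites.
missing="$(missing_tools aws docker)"
if [ -n "$missing" ]; then
  echo "Missing required tools:" $missing >&2
else
  echo "aws and docker are available"
fi
```

This only checks that the commands exist. It does not verify versions, credentials, or that the Docker daemon is running.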

Important

When running the scripts from a bastion host, ensure ports 1174 and 443 are open for outbound access, or enable full outbound access with a 0.0.0.0/0 security group rule.

Note

- On Linux, additional steps are required to manage Docker as a non-root user.

- These scripts also support deployment to an existing VPC (Virtual Private Cloud). If you already have a VPC, you must have access to the associated account to retrieve the necessary VPC details. Additionally, ensure that your environment meets the prerequisites outlined in the following section before proceeding with deployment to an existing VPC.

Prerequisites for existing VPC

A VPC (Virtual Private Cloud) with the following:

- A minimum of two public subnets with outbound access allowed.

- A minimum of two private subnets.

- A public subnet network ACL that allows HTTP (80) and HTTPS (443) from the CIDRs that need access to Insights.

- A bastion host from which to deploy the Terraform code and Insights.

Billable AWS services

The following AWS services incur charges for this configuration:

- Amazon Elastic Kubernetes Service (EKS) — managed control plane enabled with control plane logging.

- Amazon EC2:

  - Bastion instance with a gp3 root volume.

  - EKS managed node group instances.

- Amazon Elastic Block Store (EBS) — gp3 volumes attached to the bastion host and EKS node group instances.

- Amazon VPC – NAT Gateway(s) — NAT Gateway hourly and data processing charges (with associated Elastic IPs).

- Public IPv4 (Elastic IP) — billed for assigned public IPv4 addresses (bastion and NAT Gateway allocations).

- Amazon CloudWatch Logs — EKS control plane log group with a 90-day retention policy.

- Data transfer — charges may apply for egress via NAT, inter-AZ traffic, and internet egress.

- Amazon Elastic File System (EFS) – File System

- Amazon EFS Mount Targets (per Availability Zone)

- Elastic Load Balancing (Network Load Balancer) – created by the ingress-nginx Service

Environment Setup

To extract the artifact, execute the following:

Bash

tar xzvf kxi-terraform-*.tgz

This creates the kxi-terraform directory. The commands below are executed within this directory and thus use relative paths.

To change to this directory execute the following:

Bash

cd kxi-terraform

The deployment process runs inside a Docker container that includes all the tools needed by the provided scripts. A Dockerfile in the config directory can be used to build the Docker image. The image must be named kxi-terraform and can be built with the following command:

Bash

docker build -t kxi-terraform:latest ./config

User Setup

The Terraform scripts require a user with appropriate permissions which are defined in the config/kxi-aws-tf-policy.json file.

Create policy

Bash

aws iam create-policy --policy-name "${POLICY_NAME}" --policy-document file://config/kxi-aws-tf-policy.json

where POLICY_NAME is your desired policy name.

Note

The policy only needs to be created once, after which it can be reused.

Assign policy to user

Bash

aws iam attach-user-policy --policy-arn "${USER_POLICY_ARN}" --user-name "${USER}"

where:

- USER_POLICY_ARN is the ARN of the policy created in the previous step

- USER is the username of an existing user
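The two steps above can be chained so that the ARN does not have to be copied by hand. The sketch below assumes the AWS CLI is configured with credentials that are allowed to manage IAM; `create_and_attach_policy` is a hypothetical wrapper name. Note that most shells preset `USER` to your login name, so pass the IAM username explicitly.

```shell
#!/usr/bin/env bash
# Sketch: create the policy, capture its ARN, and attach it to an IAM user.
# create_and_attach_policy is a hypothetical wrapper, not part of the bundle.
create_and_attach_policy() {
  local policy_name="$1" user_name="$2" policy_arn
  # --query and --output are global AWS CLI options; Policy.Arn is the ARN
  # field of the create-policy response.
  policy_arn="$(aws iam create-policy \
    --policy-name "${policy_name}" \
    --policy-document file://config/kxi-aws-tf-policy.json \
    --query 'Policy.Arn' --output text)" || return 1
  aws iam attach-user-policy \
    --policy-arn "${policy_arn}" \
    --user-name "${user_name}" || return 1
  # Print the ARN so it can be reused for other users.
  printf '%s\n' "${policy_arn}"
}

# Example (run from the kxi-terraform directory):
# create_and_attach_policy "${POLICY_NAME}" "my-iam-user"
```

Because the policy only needs to be created once, a second run with the same policy name fails at the create step. In that case, attach the existing ARN directly.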

Configuration

The Terraform scripts are driven by environment variables, which configure how the Kubernetes cluster is deployed. These variables are populated by running the configure.sh script as follows.

Bash

./scripts/configure.sh

- Select AWS and enter your credentials:

Bash

Select Cloud Provider

Choose:

> AWS

Azure

GCP

Bash

Set AWS Access Key ID

> ••••••••••••••••••••

Bash

Set AWS Secret Access Key

> ••••••••••••••••••••••••••••••••••••••••

- Select the Region to deploy into:

Bash

Select Region

Choose:

> af-south-1

ap-east-1

ap-northeast-1

ap-northeast-2

ap-northeast-3

ap-south-1

ap-south-2

ap-southeast-1

ap-southeast-2

ap-southeast-3

ap-southeast-4

ap-southeast-5

ap-southeast-7

ca-central-1

ca-west-1

eu-central-1

eu-central-2

eu-north-1

eu-south-1

eu-south-2

eu-west-1

eu-west-2

eu-west-3

il-central-1

me-central-1

me-south-1

mx-central-1

sa-east-1

us-east-1

us-east-2

us-west-1

us-west-2

cn-north-1

cn-northwest-1

- Select the Architecture Profile:

Bash

Select Architecture Profile

Choose:

> HA

Performance

Cost-Optimised

- Select whether you are deploying to an existing VPC or want to create a new one:

Bash

Are you using an existing VPC or wish to create one?

Choose:

> New VPC

Existing VPC

If you choose Existing VPC, you are asked the following questions. If you select New VPC, skip ahead to the next step.

Bash

Please enter the vpc id of the existing vpc:

> vpc-0490ed4841d8f58cf

Please enter private subnet IDs (comma-separated, no quotes):

> subnet-07884998863f2d554, subnet-0e7903b8757f1025

Please enter the Network ACL that is allocated to the public subnets in the existing VPC:

> acl-0c0a778b0b58d53f5

Please enter the security group ID which is attached to the bastion host you are deploying from:

> sg-077098aea8747407e

- If you are using either the Performance or HA profile, you must enter the storage type to use for rook-ceph.

Performance uses rook-ceph storage type of gp3 by default. Press **Enter** to use this or select another storage type:

Choose:

> gp3

io2

- If you are using Cost-Optimised, the following is displayed:

Cost-Optimised uses rook-ceph storage type of gp3. If you wish to change this please refer to the docs.

- Enter how much capacity you require for rook-ceph. If you press Enter, the default of 100Gi is used.

Bash

Set how much capacity you require for rook-ceph, press Enter to use the default of 100Gi

Please note this is will be the usable storage with replication

> Enter rook-ceph disk space (default: 100)

- Enter the environment name, which acts as an identifier for all resources.

Bash

Set environment name (Up to 8 character, can only contain lowercase letters and numbers)

> insights

Note

When you are deploying to an existing VPC, the following step is not required.

- Enter IPs/Subnets in CIDR notation to allow access to the bastion host and VPN.

Bash

Set Network CIDR that will be allowed VPN access as well as SSH access to the bastion host

For convenience, this is pre-populated with your public IP address (using command: curl -s ipinfo.io/ip).

To specify multiple CIDRs, use a comma-separated list (for example, 192.1.1.1/32,192.1.1.2/32). Do not include quotation marks around the input.

For unrestricted access, set to 0.0.0.0/0. Ensure your network team allows such access.

> 0.0.0.0/0

- Enter IPs/Subnets in CIDR notation to allow HTTP/HTTPS access to the cluster's ingress.

Bash

Set Network CIDR that will be allowed HTTPS access

For convenience, this is pre-populated with your public IP address (using command: curl -s ipinfo.io/ip).

To specify multiple CIDRs, use a comma-separated list (for example, 192.1.1.1/32,192.1.1.2/32). Do not include quotation marks around the input.

For unrestricted access, set to 0.0.0.0/0. Ensure your network team allows such access.

> 0.0.0.0/0

- Choose how SSL certificates are managed.

Bash

Choose method for managing SSL certificates

----------------------------------------------

Existing Certificates: Requires the SSL certificate to be stored on a Kubernetes Secret on the same namespace where Insights is deployed.

Cert-Manager HTTP Validation: Issues Let's Encrypt Certificates; fully automated but requires unrestricted HTTP access to the cluster.

Choose:

> Existing Certificates

Cert-Manager HTTP Validation
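The environment name prompt above accepts up to 8 characters, limited to lowercase letters and numbers. If you script around configure.sh, a small pre-check can catch invalid names early. This is a sketch only: `is_valid_env_name` is a hypothetical helper, and treating an empty name as invalid is an assumption.

```shell
#!/usr/bin/env bash
# Return success only for names of 1 to 8 lowercase letters or digits,
# matching the rule shown in the environment name prompt.
# is_valid_env_name is a hypothetical helper, not part of configure.sh.
is_valid_env_name() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{1,8}$'
}

# Try a few candidate names.
for name in insights demo Insights my-env toolongname; do
  if is_valid_env_name "$name"; then
    echo "${name}: ok"
  else
    echo "${name}: rejected"
  fi
done
```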

Custom Tags

The config/default_tags.json file includes the tags that are applied to all resources. You can add your own tags in this file to customize your environment.

Deployment

To deploy the cluster and apply configuration, execute the following:

Bash

./scripts/deploy-cluster.sh

Note

A pre-deployment check will be performed before proceeding further. If the check fails, the script exits immediately to avoid deployment failures. You should resolve all issues before executing the command again.

This script executes a series of Terraform and custom commands and may take some time to run. If the command fails at any point due to network issues or timeouts, you can run it again until it completes without errors. If the error is related to the cloud provider account (for example, quota limits), you must resolve it before running the command again.

If any variable in the configuration file needs to be changed, the cluster must be destroyed first and then re-deployed.

For easier searching and filtering, the created resources are named/tagged using the aws-${ENV} prefix. For example, if the ENV is set to demo, all resource names/tags include the aws-demo prefix. An exception is the EKS Node Group EC2 instances as they use the Node Group name ("default"). If you have deployed multiple clusters, you can use the Cluster tag on the EC2 Instances Dashboard.
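Because the deploy command can be re-run safely after network issues or timeouts, a bounded retry wrapper is one way to automate that. The sketch below is an illustration, not part of the bundle: `retry` is a hypothetical helper, and the attempt count and delay are arbitrary. It cannot distinguish transient failures from account or quota errors, which still need to be fixed by hand.

```shell
#!/usr/bin/env bash
# Run a command until it succeeds, up to a maximum number of attempts.
# Usage: retry <max_attempts> <delay_seconds> <command> [args...]
# retry is a hypothetical helper, not part of the kxi-terraform bundle.
retry() {
  local max_attempts="$1" delay_seconds="$2" attempt=1
  shift 2
  while true; do
    if "$@"; then
      return 0
    fi
    if [ "$attempt" -ge "$max_attempts" ]; then
      echo "giving up after ${attempt} attempts" >&2
      return 1
    fi
    echo "attempt ${attempt} failed; retrying in ${delay_seconds}s" >&2
    sleep "$delay_seconds"
    attempt=$((attempt + 1))
  done
}

# Example (run from the kxi-terraform directory):
# retry 3 60 ./scripts/deploy-cluster.sh
```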

Cluster Access

To access the cluster, execute the following:

Bash

./scripts/manage-cluster.sh

This command starts a shell session on a Docker container, generates a kubeconfig entry, and connects to the VPN. Once the command completes, you can manage the cluster through helm/kubectl.

Note

- The kxi-terraform directory on the host is mounted on the container at /terraform. Files and directories created while using this container persist after the container is stopped, provided they are created under the /terraform directory.

- If other users require access to the cluster, they need to download and extract the artifact, build the Docker container, and copy the kxi-terraform.env file as well as the terraform/aws/client.ovpn file (generated during deployment) to their own extracted artifact directory on the same paths. Once these two files are copied, the above script can be used to access the cluster.
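Copying the two access files named in the note above can be sketched as follows. `copy_access_files` is a hypothetical helper; it assumes both arguments are paths to extracted kxi-terraform directories that are reachable from the same machine.

```shell
#!/usr/bin/env bash
# Copy kxi-terraform.env and terraform/aws/client.ovpn from one extracted
# artifact directory to another, keeping the same relative paths.
# copy_access_files is a hypothetical helper, not part of the bundle.
copy_access_files() {
  local src="$1" dst="$2" f
  for f in kxi-terraform.env terraform/aws/client.ovpn; do
    if [ ! -f "${src}/${f}" ]; then
      echo "missing ${src}/${f}" >&2
      return 1
    fi
  done
  mkdir -p "${dst}/terraform/aws" || return 1
  cp "${src}/kxi-terraform.env" "${dst}/kxi-terraform.env" || return 1
  cp "${src}/terraform/aws/client.ovpn" "${dst}/terraform/aws/client.ovpn"
}

# Example:
# copy_access_files /path/to/deployer/kxi-terraform /path/to/my/kxi-terraform
```

Both files grant access to the environment, so share them over a channel you trust.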

The following kubectl commands can be used to retrieve information about the installed components.

- List Kubernetes Worker Nodes

Bash

kubectl get nodes

- List Kubernetes namespaces

Bash

kubectl get namespaces

- List cert-manager pods running on cert-manager namespace

Bash

kubectl get pods --namespace=cert-manager

- List nginx ingress controller pod running on ingress-nginx namespace

Bash

kubectl get pods --namespace=ingress-nginx

- List rook-ceph pods running on rook-ceph namespace

Bash

kubectl get pods --namespace=rook-ceph

DNS Configuration

Hostname

When deploying kdb Insights Enterprise, you need to configure a hostname that you will use to access the application's user interface. The hostname should match a record that you create in your domain name system (DNS) service.

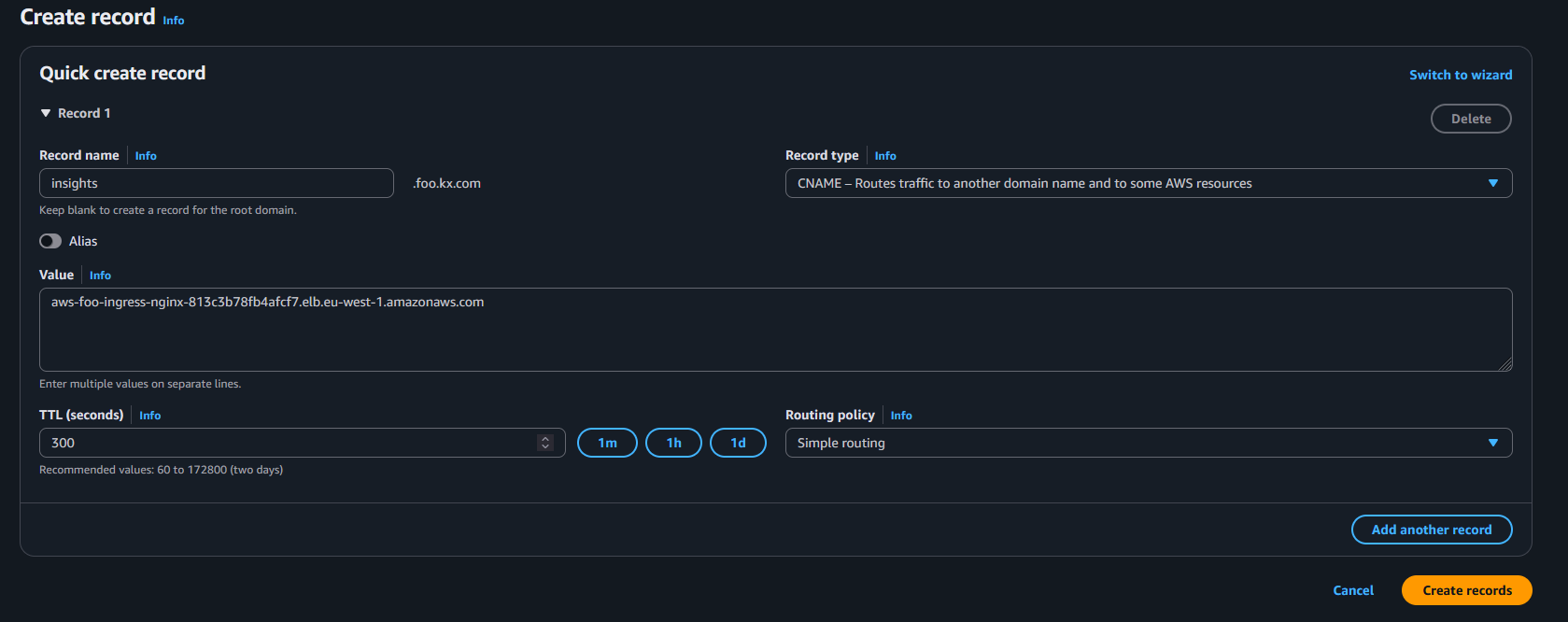

DNS Record

When creating your DNS record, the Record name should match the Hostname that you configured when deploying kdb Insights Enterprise (refer to the previous section), and the Value must be the name of the cluster's ingress LoadBalancer as described below. In AWS, the Record type must be set to CNAME.

You can get the cluster's ingress LoadBalancer's name by running the following command:

Bash

kubectl get svc -n ingress-nginx ingress-nginx-controller

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

ingress-nginx-controller LoadBalancer 172.20.92.195 aws-foo-ingress-nginx-813c3b78fb4afcf7.elb.eu-west-1.amazonaws.com 80:30182/TCP,443:32581/TCP 45m

Using the output above, create a CNAME record for your hostname which has the value aws-foo-ingress-nginx-813c3b78fb4afcf7.elb.eu-west-1.amazonaws.com.

For example, if your hostname was insights.foo.kx.com, you would create a record in AWS Route53 as in the screenshot below.
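If you prefer the AWS CLI to the Route53 console, the CNAME record described above can be expressed as a Route53 change batch. The sketch below only builds the JSON document. `cname_change_batch` is a hypothetical helper, and the TTL of 300 seconds and the `HOSTED_ZONE_ID` variable in the usage comments are assumptions.

```shell
#!/usr/bin/env bash
# Print a Route53 change batch that creates or updates a CNAME record.
# Arguments: <record name> <target hostname>
# cname_change_batch is a hypothetical helper; the TTL of 300 is an assumption.
cname_change_batch() {
  printf '{"Changes":[{"Action":"UPSERT","ResourceRecordSet":{"Name":"%s","Type":"CNAME","TTL":300,"ResourceRecords":[{"Value":"%s"}]}}]}\n' "$1" "$2"
}

# Example using the hostname and LoadBalancer name from the text above.
cname_change_batch insights.foo.kx.com \
  aws-foo-ingress-nginx-813c3b78fb4afcf7.elb.eu-west-1.amazonaws.com

# To apply it against a real hosted zone (HOSTED_ZONE_ID is yours to supply):
# lb="$(kubectl get svc -n ingress-nginx ingress-nginx-controller \
#   -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')"
# cname_change_batch insights.foo.kx.com "$lb" > change.json
# aws route53 change-resource-record-sets \
#   --hosted-zone-id "$HOSTED_ZONE_ID" --change-batch file://change.json
```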

Ingress Certificate

The hostname used for your kdb Insights Enterprise deployment is required to be covered by a CA-signed certificate.

Note

Self-signed certificates are not supported.

The Terraform scripts support Existing Certificates and Cert-Manager with HTTP Validation.

Existing Certificate

You can generate a certificate for your chosen hostname and pass the cert.pem and cert.key files during the installation of kdb Insights Enterprise.

Cert-Manager with HTTP Validation

Another option for meeting the CA-signed certificate requirement is to use cert-manager and Let's Encrypt with HTTP validation. You enable this feature by selecting the Cert-Manager HTTP Validation option during configuration.

Note

This option introduces a security consideration, because Let's Encrypt must connect to your ingress to verify domain ownership, which necessitates unrestricted access to your ingress LoadBalancer.

Advanced Configuration

There are other automated approaches which are outside the scope of the Terraform scripts. One such approach is to use cert-manager and Let's Encrypt with DNS validation. This option can be configured to work with AWS Route53.

Next Steps

Once you have configured DNS and chosen how the certificate for your hostname is managed, you can proceed to the kdb Insights Enterprise installation.

Environment Destroy

Before you destroy the environment, make sure you don't have any active Shell sessions on the Docker container. You can close the session by executing the following:

Bash

exit

To destroy the cluster, execute the following:

Bash

./scripts/destroy-cluster.sh

If the command fails at any point due to network issues or timeouts, you can run it again until it completes without errors.

Note

- In some cases, the command may fail because the VPN is unavailable or AWS resources were not cleaned up properly. To resolve this, delete the terraform/aws/client.ovpn file and run the command again.

- Even after the cluster is destroyed, the disks created dynamically by the application may still be present and incur additional costs. To filter these disks on the EBS dashboard, the kubernetes.io/cluster/aws-${ENV} tag needs to be added.
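The same filter can be applied from the AWS CLI. The sketch below lists unattached volumes that carry the cluster tag key. `list_leftover_volumes` is a hypothetical helper, and restricting the result to volumes in the `available` state is an assumption about what counts as left over.

```shell
#!/usr/bin/env bash
# List IDs of unattached EBS volumes tagged with the cluster tag key.
# Argument: the ENV value from kxi-terraform.env (for example, demo).
# list_leftover_volumes is a hypothetical helper, not part of the bundle.
list_leftover_volumes() {
  local env_name="$1"
  aws ec2 describe-volumes \
    --filters "Name=tag-key,Values=kubernetes.io/cluster/aws-${env_name}" \
              "Name=status,Values=available" \
    --query 'Volumes[].VolumeId' --output text
}

# Example:
# list_leftover_volumes demo
```

Review the returned volume IDs before deleting anything, since the filter matches on the tag key only.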

Uploading and Sharing Cluster Artifacts

To support collaboration, reproducibility, and environment recovery, this Terraform client script provides built-in functionality to upload key configuration artifacts to the cloud backend storage associated with your deployment. These artifacts allow other users or automation systems to connect to the environment securely and consistently.

What Gets Uploaded?

The following files are uploaded to your backend storage under the path ENV which is defined within kxi-terraform.env:

- version.txt: Contains version metadata for the deployment.

- terraform/aws/client.ovpn: VPN configuration for secure access.

- kxi-terraform.env: The environment file with sensitive credentials removed.

When Are Files Uploaded?

The upload is automatically triggered at the end of the deployment process by:

Bash

./scripts/deploy-cluster.sh

The internal upload_artifacts function performs the upload to the following backend:

- S3 bucket (s3://${KX_STATE_BUCKET_NAME}/${ENV}/)

These files can then be downloaded by teammates or automation scripts to replicate access and configuration.

You can also trigger the upload manually from within a manage-cluster.sh session by running:

Bash

./scripts/terraform.sh upload-artifacts
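A teammate could fetch the uploaded files with the AWS CLI along these lines. This is a sketch under assumptions: `artifact_uri` and `download_artifacts` are hypothetical helpers, and the layout of the objects under the `${ENV}/` prefix is not documented here, so inspect the download before copying files into place.

```shell
#!/usr/bin/env bash
# Build the S3 URI under which the deployment artifacts are stored.
# Arguments: <bucket name> <environment name>
artifact_uri() {
  printf 's3://%s/%s/\n' "$1" "$2"
}

# Download everything under that prefix into a local directory.
# Arguments: <bucket name> <environment name> <destination directory>
download_artifacts() {
  aws s3 cp "$(artifact_uri "$1" "$2")" "$3" --recursive
}

# Example, using the variable names from kxi-terraform.env:
# download_artifacts "${KX_STATE_BUCKET_NAME}" "${ENV}" ./downloaded-artifacts
```

The uploaded kxi-terraform.env has sensitive credentials removed, so each user still needs to supply their own.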

Cleaning Up Artifacts

To ensure artifacts don’t persist unnecessarily in your backend storage, the system also supports automatic cleanup. These files are deleted at the end of the cluster teardown with the following command:

Bash

./scripts/destroy-cluster.sh

The cleanup is performed by the delete_uploaded_artifacts function and removes the same files from the corresponding ENV location in your backend (stored in kxi-terraform.env).

This keeps your backend clean and prevents the reuse of stale or outdated configuration files.

Advanced Configuration

You can further configure your cluster by editing the newly generated kxi-terraform.env file in the current directory. These edits must be made prior to running the deploy-cluster.sh script. The list of variables which can be edited are given below:

| Environment Variable | Details | Default Value | Possible Values |
|---|---|---|---|
| TF_VAR_enable_metrics | Enables forwarding of container metrics to AWS CloudWatch | false | true / false |
| TF_VAR_enable_logging | Enables forwarding of container logs to AWS CloudWatch | false | true / false |
| TF_VAR_default_node_type | Node type for default node pool | Depends on profile | EC2 Instance Type |
| TF_VAR_rook_ceph_pool_node_type | Node type for Rook-Ceph node pool (when configured) | Depends on profile | EC2 Instance Type |
| TF_VAR_letsencrypt_account | If you intend to use cert-manager to issue certificates, then you need to provide a valid email address if you wish to receive notifications related to certificate expiration | email address | |
| TF_VAR_bastion_whitelist_ips | The list of IPs/Subnets in CIDR notation that are allowed VPN/SSH access to the bastion host. | N/A | IP CIDRs |
| TF_VAR_insights_whitelist_ips | The list of IPs/Subnets in CIDR notation that are allowed HTTP/HTTPS access to the VPC | N/A | IP CIDRs |
| TF_VAR_letsencrypt_enable_http_validation | Enables issuing of Let's Encrypt certificates using cert-manager HTTP validation. This is disabled by default to allow only pre-existing certificates. | false | true / false |
| TF_VAR_rook_ceph_storage_size | Size of usable data provided by rook-ceph. | 100Gi | XXXGi |
| TF_VAR_enable_cert_manager | Deploy Cert Manager | true | true / false |
| TF_VAR_enable_ingress_nginx | Deploy Ingress NGINX | true | true / false |
| TF_VAR_enable_cluster_autoscaler | Deploy AWS Cluster Autoscaler | true | true / false |
| TF_VAR_enable_ebs_csi_driver | Deploy EBS CSI Driver | true | true / false |
| TF_VAR_enable_efs_csi_driver | Deploy EFS CSI Driver | true | true / false |
| TF_VAR_rook_ceph_mds_resources_memory_limit | The default resource limit is 8Gi. You can override this to change the resource limit of the metadataServer of rook-ceph. Note: the MDS cache uses 50% of this limit, so with the default setting, the MDS cache is set to 4Gi. | 8Gi | XXGi |
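Editing one of these variables can be scripted. The sketch below assumes that kxi-terraform.env holds one `NAME=value` assignment per line, as in the whitelist example in this document; `set_env_var` is a hypothetical helper and not part of the bundle.

```shell
#!/usr/bin/env bash
# Set NAME=value in an env file, replacing an existing assignment or
# appending a new one. Assumes one NAME=value assignment per line.
# set_env_var is a hypothetical helper, not part of the kxi-terraform bundle.
set_env_var() {
  local file="$1" key="$2" value="$3" tmp
  tmp="$(mktemp)" || return 1
  awk -v k="$key" -v v="$value" '
    index($0, k "=") == 1 { print k "=" v; found = 1; next }
    { print }
    END { if (!found) print k "=" v }
  ' "$file" > "$tmp" || { rm -f "$tmp"; return 1; }
  # Write back through the original file so its permissions are kept.
  cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Example (run before ./scripts/deploy-cluster.sh):
# set_env_var kxi-terraform.env TF_VAR_enable_metrics true
```

Values containing backslashes are not handled by this sketch, because awk interprets escape sequences in `-v` assignments.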

Update whitelisted CIDRs

To modify the whitelisted CIDRs for HTTPS or SSH access, update the following variables in the kxi-terraform.env file:

HCL

# List of IPs or Subnets that will be allowed VPN access as well as SSH access

# to the bastion host for troubleshooting VPN issues.

TF_VAR_bastion_whitelist_ips=["192.168.0.1/32", "192.168.0.2/32"]

# List of IPs or Subnets that will be allowed HTTPS access

TF_VAR_insights_whitelist_ips=["192.168.0.1/32", "192.168.0.2/32"]

Once you have updated these with the correct CIDRs, run the deploy script:

Bash

./scripts/deploy-cluster.sh

Note

You can specify up to three CIDRs, as this is the default limit imposed by the maximum number of allowed NACL rules. To use more than three, you must request a quota increase from AWS for the relevant account.
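The list syntax used by these variables differs from the comma-separated form accepted at the configure.sh prompts. The following sketch converts one into the other and warns when more than three CIDRs are given. `format_cidr_list` is a hypothetical helper, and its check covers the shape of an IPv4 CIDR only, not the numeric ranges.

```shell
#!/usr/bin/env bash
# Convert "a/32, b/32" into ["a/32", "b/32"] for use in kxi-terraform.env.
# Fails on entries that do not look like IPv4 CIDRs and warns above three.
# format_cidr_list is a hypothetical helper, not part of the bundle.
format_cidr_list() {
  printf '%s\n' "$1" | awk -F',' '{
    n = 0; out = ""
    for (i = 1; i <= NF; i++) {
      gsub(/^[ \t]+|[ \t]+$/, "", $i)        # trim surrounding whitespace
      if ($i == "") continue
      if ($i !~ /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+\/[0-9]+$/) {
        print "invalid CIDR: " $i > "/dev/stderr"
        exit 1
      }
      out = out (n ? ", " : "") "\"" $i "\""
      n++
    }
    if (n > 3) print "warning: more than three CIDRs may exceed the NACL rule limit" > "/dev/stderr"
    print "[" out "]"
  }'
}

# Example: build the line for the bastion whitelist.
echo "TF_VAR_bastion_whitelist_ips=$(format_cidr_list '192.168.0.1/32, 192.168.0.2/32')"
```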

Existing VPC notes

If you are deploying to an existing VPC, ensure that the public subnets in use do not restrict HTTP (80) and HTTPS (443) traffic from the sources that need to access Insights.